Note

This is a community plugin, an external project maintained by its respective author. Community plugins are not part of FiftyOne core and may change independently. Please review each plugin’s documentation and license before use.

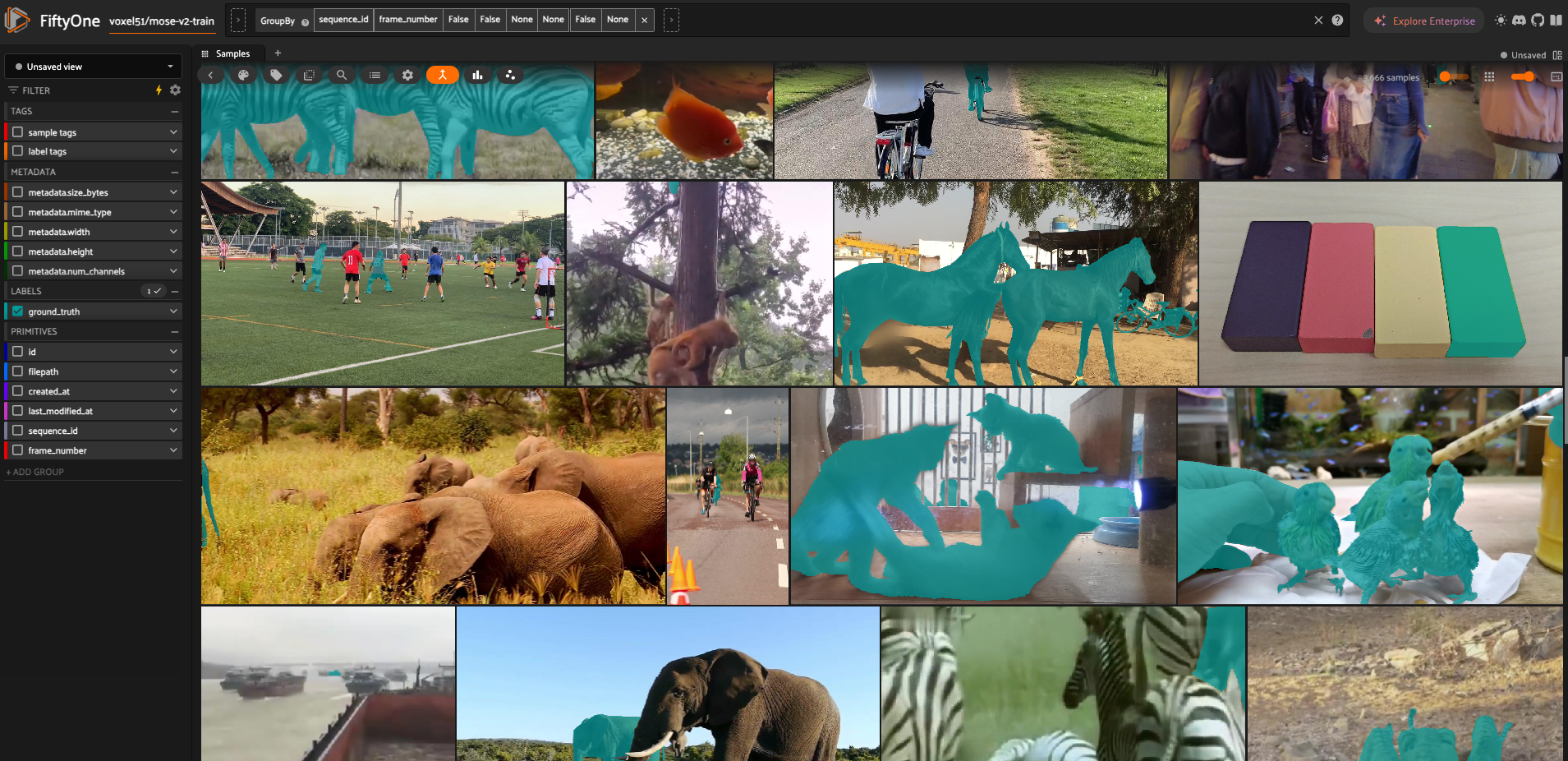

mose-v2#

A FiftyOne remote zoo dataset integration for MOSEv2, a large-scale video object segmentation benchmark: thousands of videos, instance masks, and diverse real-world conditions (occlusion, small objects, weather, low light, camouflage, etc.). See the project site and upstream repo for the full benchmark description.

Source and citation#

Website: mose.video

GitHub: MOSEv2

Hugging Face (dataset card): FudanCVL/MOSEv2

License: Original MOSEv2 terms: CC BY-NC-SA 4.0

Citation: See also other related citations from the official MOSEv2 website.

    @article{MOSEv2,
      title={{MOSEv2}: A More Challenging Dataset for Video Object Segmentation in Complex Scenes},
      author={Ding, Henghui and Ying, Kaining and Liu, Chang and He, Shuting and Jiang, Xudong and Jiang, Yu-Gang and Torr, Philip HS and Bai, Song},
      journal={arXiv preprint arXiv:2508.05630},
      year={2025}
    }

Quick start#

Installation

    pip install fiftyone
    pip install gdown  # required for Google Drive download; see also requirements.txt

Load via the FiftyOne Dataset Zoo

    import fiftyone as fo
    import fiftyone.zoo as foz

    dataset = foz.load_zoo_dataset(
        "https://github.com/voxel51/mose-v2",
        split="train",  # or "validation"
        max_samples=1000,  # optional, for quicker exploration
    )

    session = fo.launch_app(dataset)

    # Dynamic grouped view: one group per sequence, frames ordered by frame number
    grouped_view = dataset.group_by("sequence_id", order_by="frame_number")
    session.view = grouped_view  # show the grouped view in the App

Notes:#

Downloads train and validation archives from Google Drive (file IDs are in `__init__.py` as `DRIVE_FILE_IDS`).

Extracts `train/` and `valid/` under the FiftyOne-managed dataset directory. A symlink `validation` → `valid` is created when needed so split names match FiftyOne’s expectations.

    dataset_dir/
        train/
            JPEGImages/<sequence_name>/{00000,00001,...}.jpg
            Annotations/<sequence_name>/{00000,00001,...}.png
        valid/
            JPEGImages/<sequence_name>/{00000,00001,...}.jpg
            Annotations/<sequence_name>/00000.png

Registers one sample per video frame. Segmentation is stored as an indexed PNG per frame (`ground_truth`: `fo.Segmentation` with `mask_path`).

Annotation masks are 8-bit indexed PNGs: pixel value `0` is background; value `N` is object instance `N`.
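The mask encoding described in these notes can be decoded without any FiftyOne-specific code. The sketch below is a minimal, dependency-free illustration. It assumes the mask has already been loaded as rows of integer palette indices (for example with an image library that preserves the indexed values of a palette-mode PNG; how you load the file is an assumption about your own code, not something this integration prescribes). The helper name `instance_pixel_counts` is hypothetical.

```python
from collections import Counter


def instance_pixel_counts(mask_rows):
    """Count pixels per object instance in an indexed mask.

    mask_rows: iterable of rows, each an iterable of integer pixel values,
    where 0 is background and N > 0 is object instance N.
    """
    counts = Counter(value for row in mask_rows for value in row)
    counts.pop(0, None)  # drop the background entry, if present
    return dict(sorted(counts.items()))


# Tiny synthetic 3x4 mask (not real MOSEv2 data) with instances 1 and 2
mask = [
    [0, 0, 1, 1],
    [0, 2, 1, 1],
    [0, 2, 2, 0],
]
print(instance_pixel_counts(mask))  # {1: 4, 2: 3}
```

The keys of the returned dictionary are the instance IDs present in the frame, so an empty dictionary means the frame contains only background.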

Sample fields#

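| Field | Role |
|---|---|
| `filepath` | Path to the JPEG frame |
| `sequence_id` | Video sequence name |
| `frame_number` | Zero-based frame index |
| `tags` | Split and sequence name |
| `ground_truth` | `fo.Segmentation` with `mask_path` pointing to the frame's indexed PNG mask |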

Statistics#

| Split | Sequences | Total Samples | Annotated Samples |
|---|---|---|---|
| train | 3,666 | 311,843 | 311,843 |
| validation | 433 | 66,526 | 433 (first frame only) |
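The gap between total and annotated samples in the validation split follows from the on-disk layout: every frame has a JPEG, but only the first frame of each validation sequence has a mask. A minimal sketch of how such counts could be checked locally, assuming the `JPEGImages/` and `Annotations/` layout described in the notes; `count_split` is a hypothetical helper and is not part of the integration.

```python
from pathlib import Path


def count_split(split_dir):
    """Return (sequences, total_frames, annotated_frames) for one split directory."""
    split_dir = Path(split_dir)
    images_root = split_dir / "JPEGImages"
    masks_root = split_dir / "Annotations"

    sequences = total = annotated = 0
    for seq_dir in sorted(p for p in images_root.iterdir() if p.is_dir()):
        sequences += 1
        for frame in seq_dir.glob("*.jpg"):
            total += 1
            # A frame counts as annotated when a same-named PNG mask exists
            if (masks_root / seq_dir.name / f"{frame.stem}.png").is_file():
                annotated += 1
    return sequences, total, annotated


# Usage (the path is a placeholder for your FiftyOne-managed dataset directory):
# print(count_split("dataset_dir/valid"))
```

Matching masks to frames by file stem keeps the check independent of how many frames in a sequence are annotated.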

Visualize#

Each image is tagged with its split and with its sequence name; frames that share a `sequence_id` belong to the same clip.

For a video-like browser in the App, use a dynamic grouped view: one group per sequence, frames ordered by `frame_number`.
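To inspect a single clip rather than the whole split, the tags can be combined with frame ordering. The following is a minimal sketch, assuming each sample's tag for its sequence is exactly the sequence name (the exact tag format is not confirmed here) and that `dataset` is a FiftyOne dataset or view; `clip_view` is a hypothetical helper built on the `match_tags()` and `sort_by()` view methods.

```python
def clip_view(dataset, sequence_name):
    """Return the frames of one sequence in playback order.

    Assumes the sequence name is stored as a sample tag and that every
    sample has a `frame_number` field.
    """
    return dataset.match_tags(sequence_name).sort_by("frame_number")


# Usage with the session from the quick start (placeholder sequence name):
# session.view = clip_view(dataset, "<sequence_name>")
```

If the tag format differs in your copy of the dataset, filtering on the `sequence_id` field instead is the safer choice.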